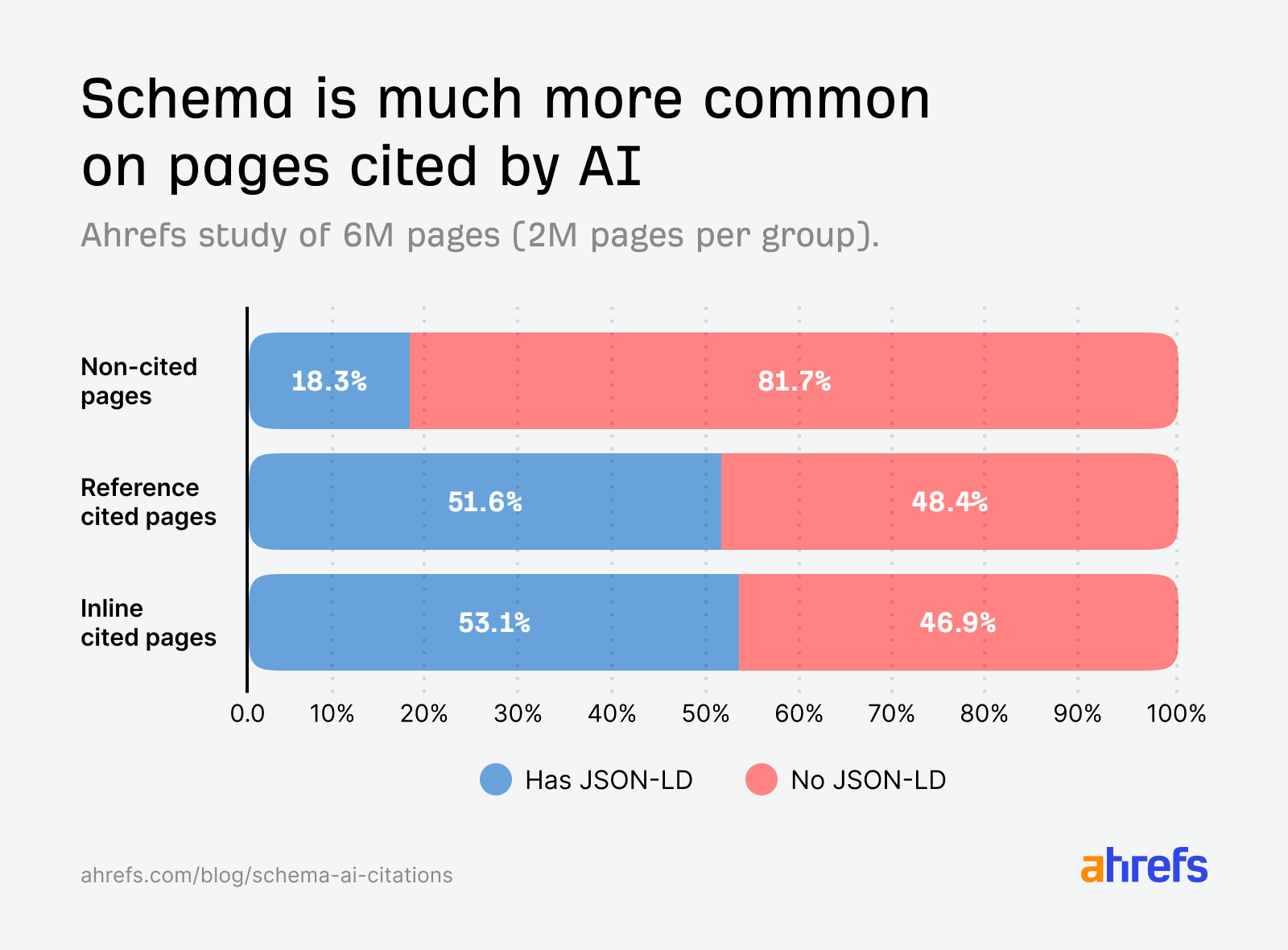

AI-cited pages were almost three times more likely to have JSON-LD than non-cited pages.

That’s a big gap, and the kind of stat that gets shared in LinkedIn carousels and conference slides as proof that schema is an AI visibility lever.

But we weren’t satisfied with the data since it could easily have been correlation, not cause.

Schema markup tends to live on better-maintained, more technically sophisticated sites, and those same sites publish stronger content, build more authority, earn more links, and do all the other things that get pages cited.

Schema could be doing real work, but it could also just be riding the wave of every other signal.

So we couldn’t actually answer the question SEOs really care about: if I add schema to my page, will I get cited more by AI?

To find out, we ran a second study designed to isolate the effect of adding schema.

Here’s what we found.

We tracked 1,885 web pages that added JSON-LD schema between August 2025 and March 2026, matched them against 4,000 control pages, and measured citation changes across Google AI Overviews, AI Mode, and ChatGPT.
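The article doesn't describe exactly how the 4,000 control pages were matched to the treated pages. As an illustration only, here's a minimal nearest-neighbor sketch that pairs each treated URL with the `k` control URLs whose pre-treatment citation counts are closest. The `match_controls` name, the URL-to-citations dictionaries, and the default `k=2` are all hypothetical.

```python
from typing import Dict, List


def match_controls(
    treated: Dict[str, float],
    controls: Dict[str, float],
    k: int = 2,
) -> Dict[str, List[str]]:
    """Hypothetical helper: for each treated URL, pick the k control URLs
    whose baseline (pre-treatment) citation counts are closest.

    Controls are matched with replacement, so one control page can serve
    several treated pages. Ties are broken alphabetically by URL so the
    result is deterministic.
    """
    matches: Dict[str, List[str]] = {}
    for url, baseline in treated.items():
        ranked = sorted(
            controls,
            key=lambda c: (abs(controls[c] - baseline), c),
        )
        matches[url] = ranked[:k]
    return matches
```

Matching on a single baseline number is the simplest possible version. Because both groups were already declining before schema was added, a real study would more likely also match on the pre-treatment trend.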

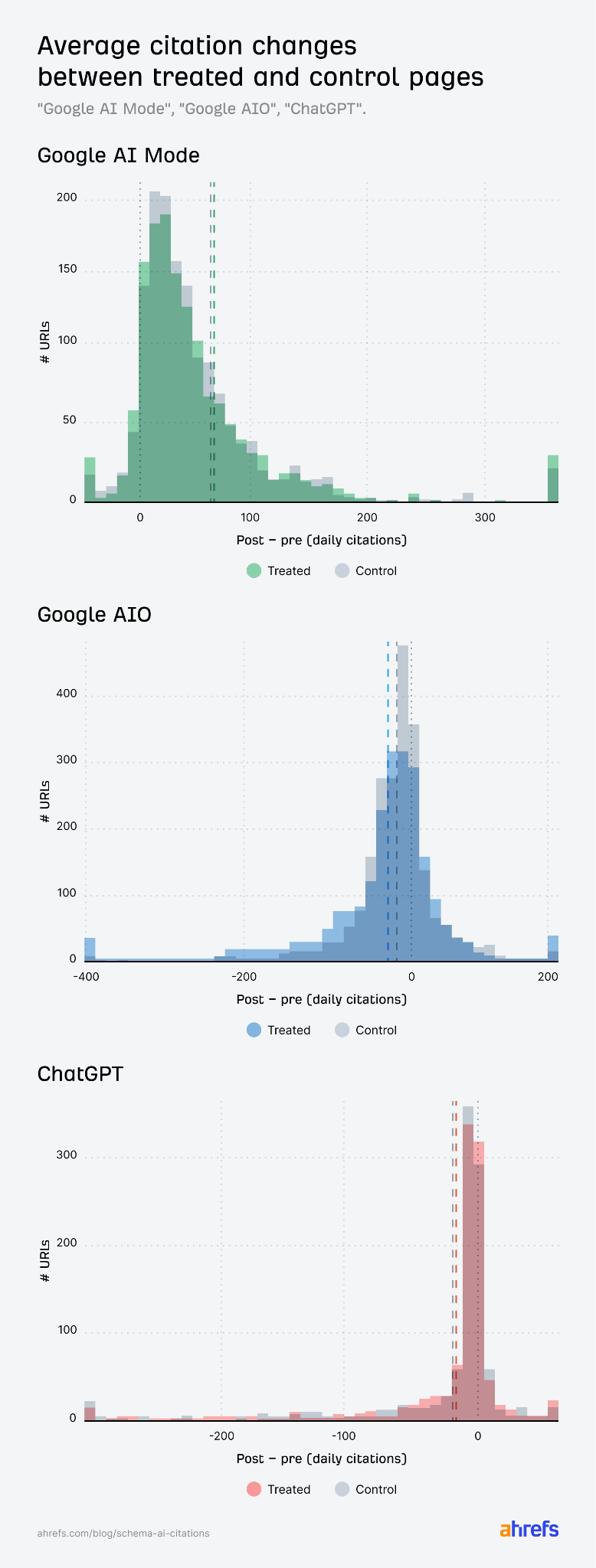

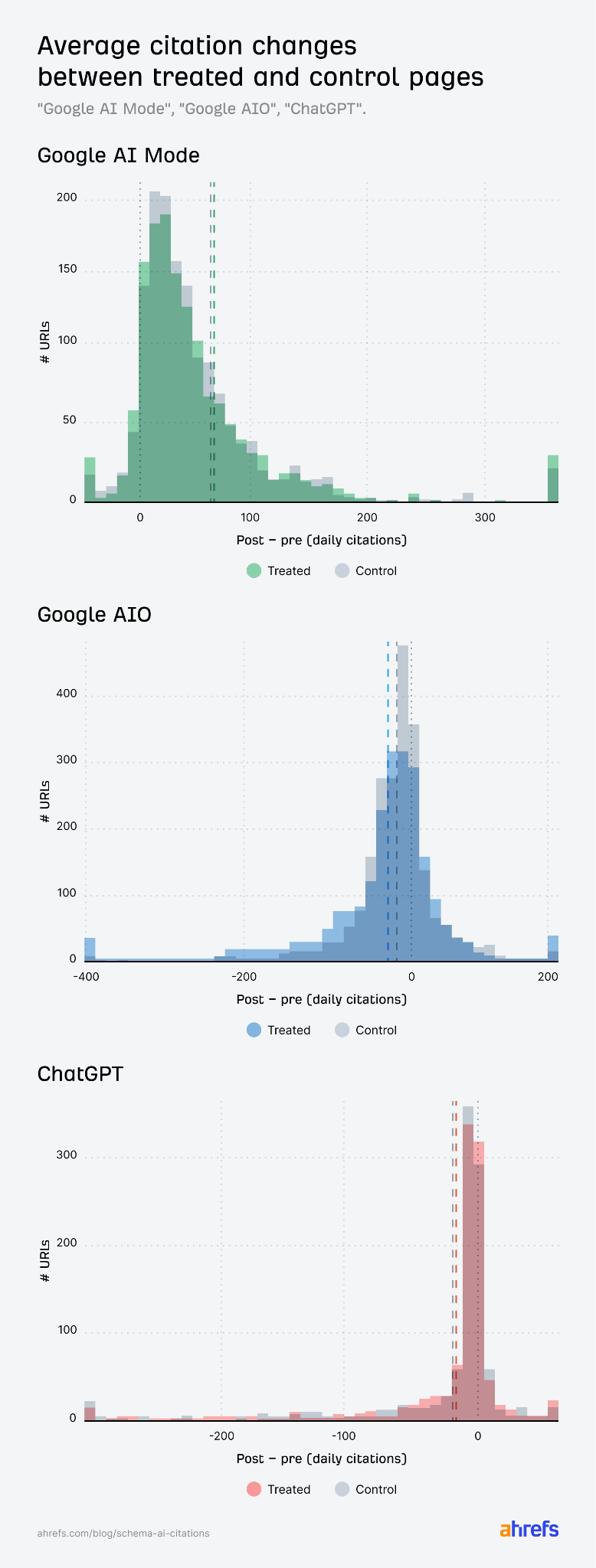

Adding schema produced no major uplift in citations on any platform.

| AI source | Effect on citations | Verdict |
|---|---|---|
| Google AIO | −4.6% | Small but statistically significant decline relative to matched controls (both groups were declining together, but treated pages fell slightly faster) |
| Google AI Mode | +2.4% | Statistically indistinguishable from zero |
| ChatGPT | +2.2% | Statistically indistinguishable from zero |

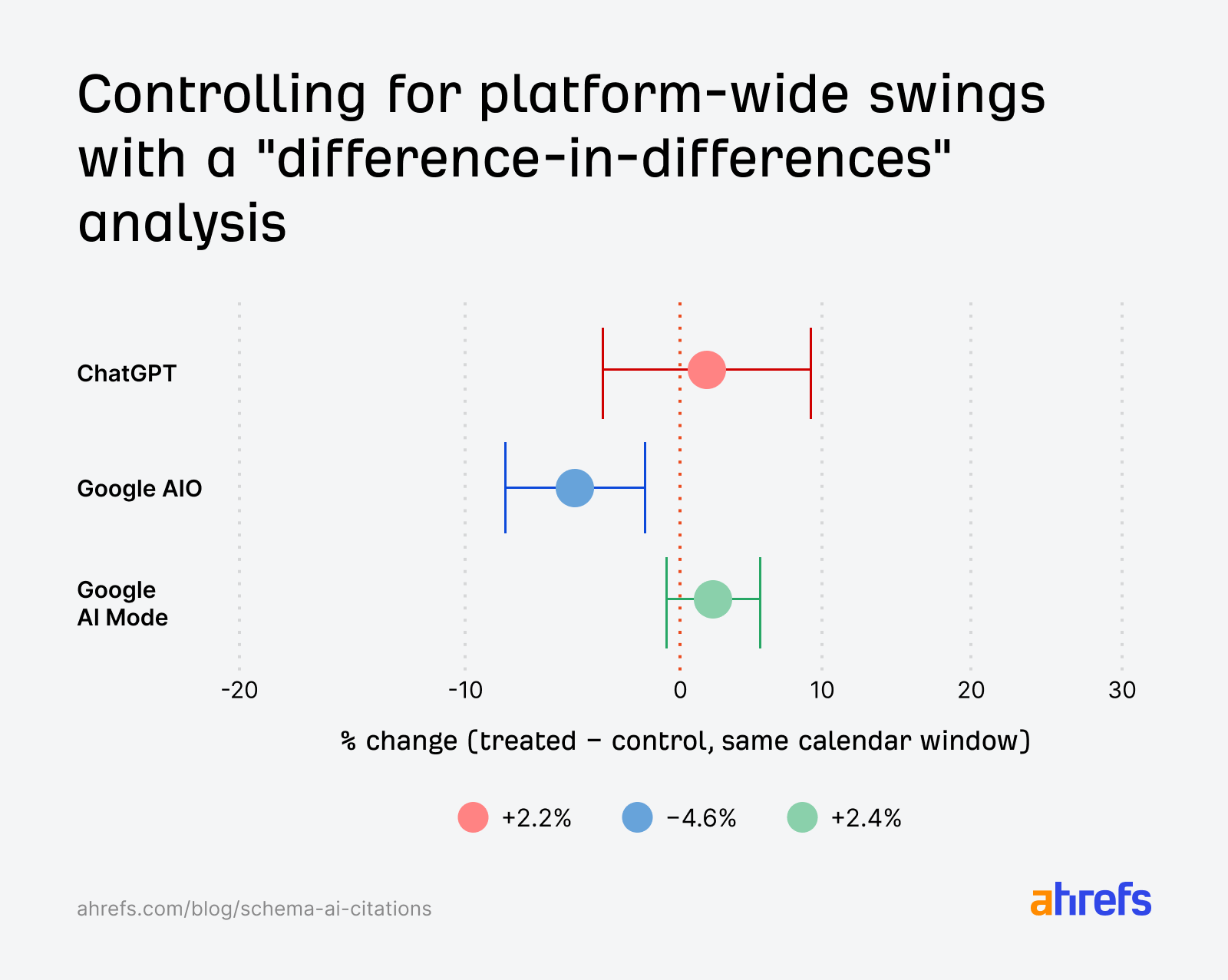

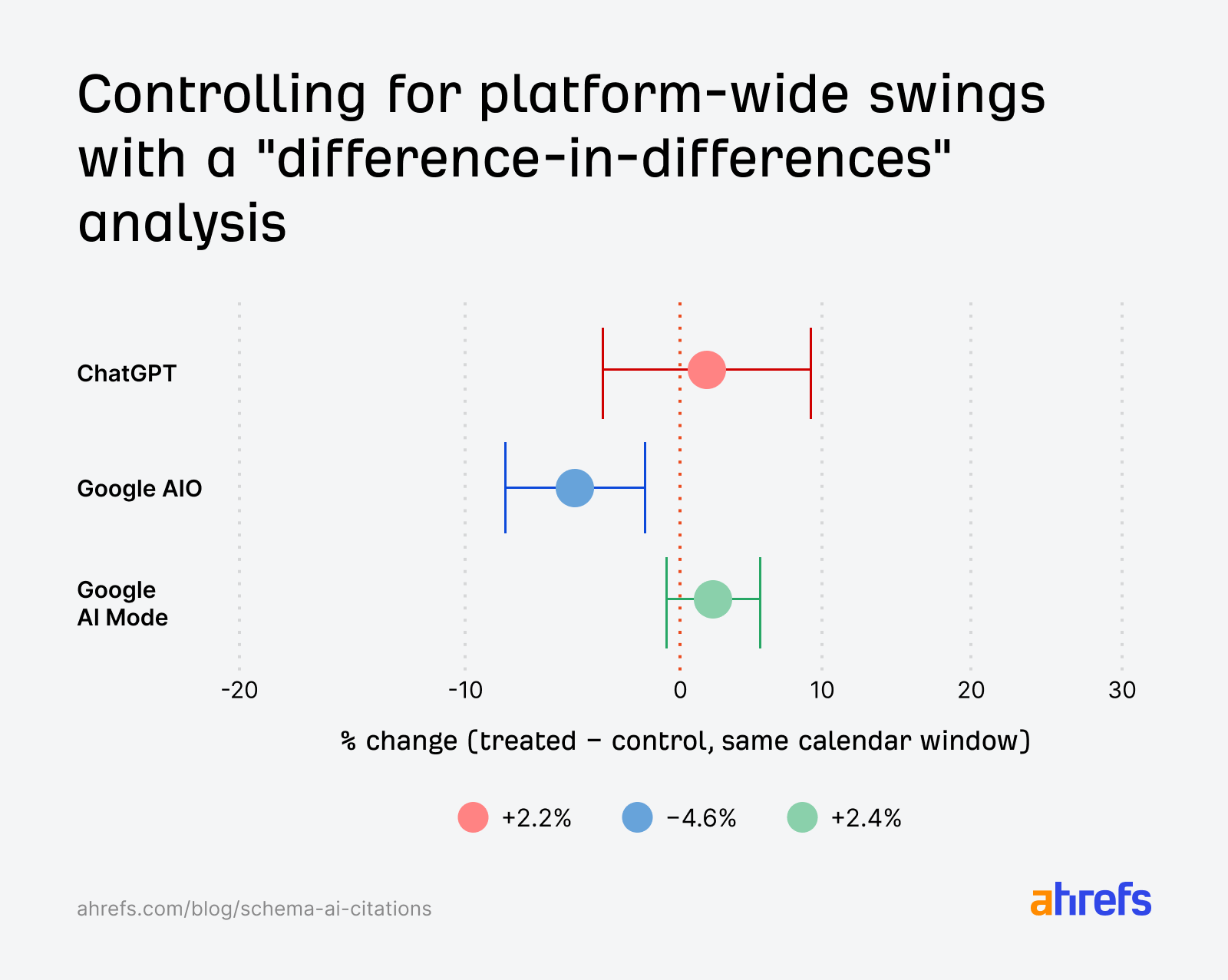

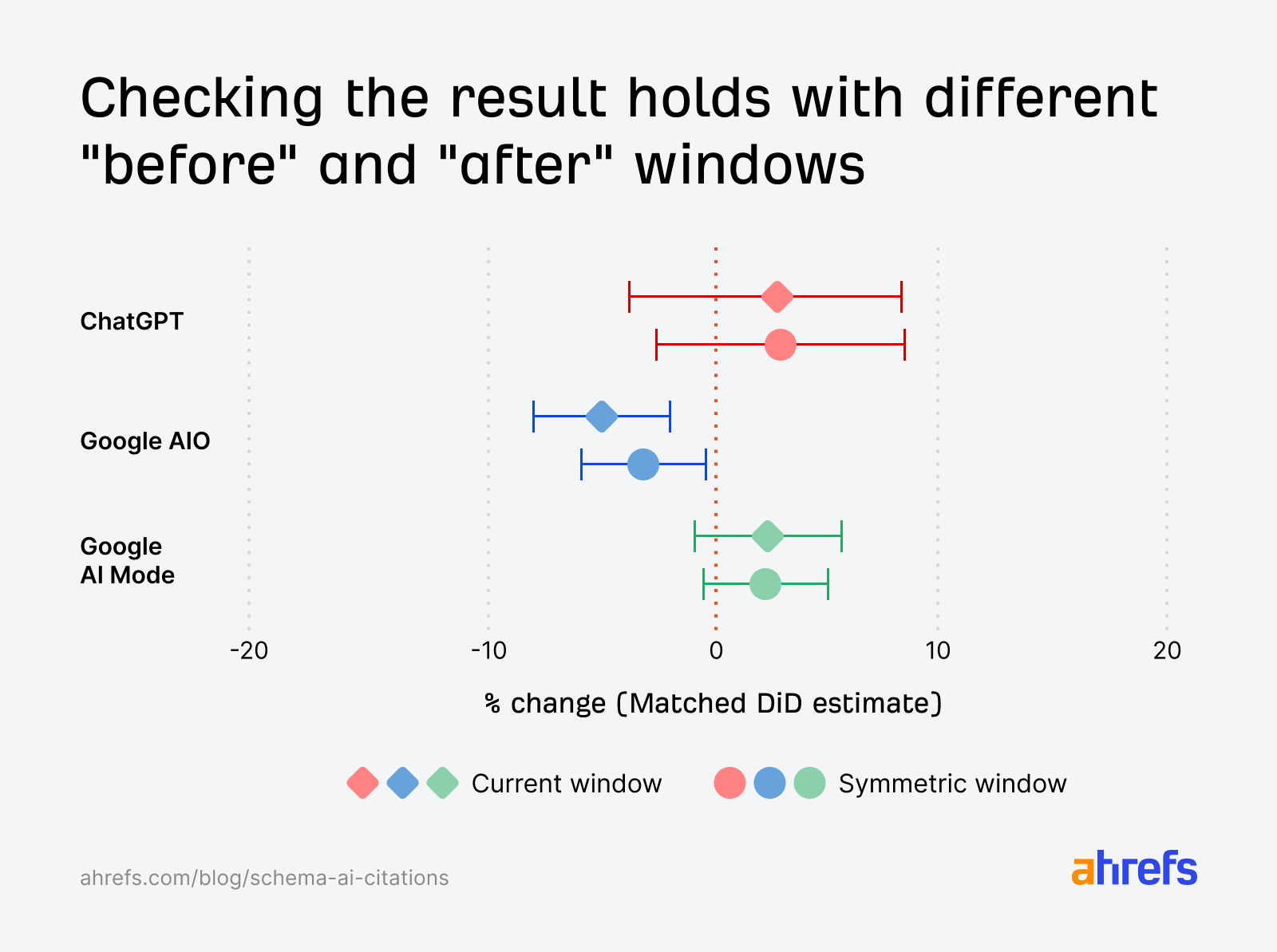

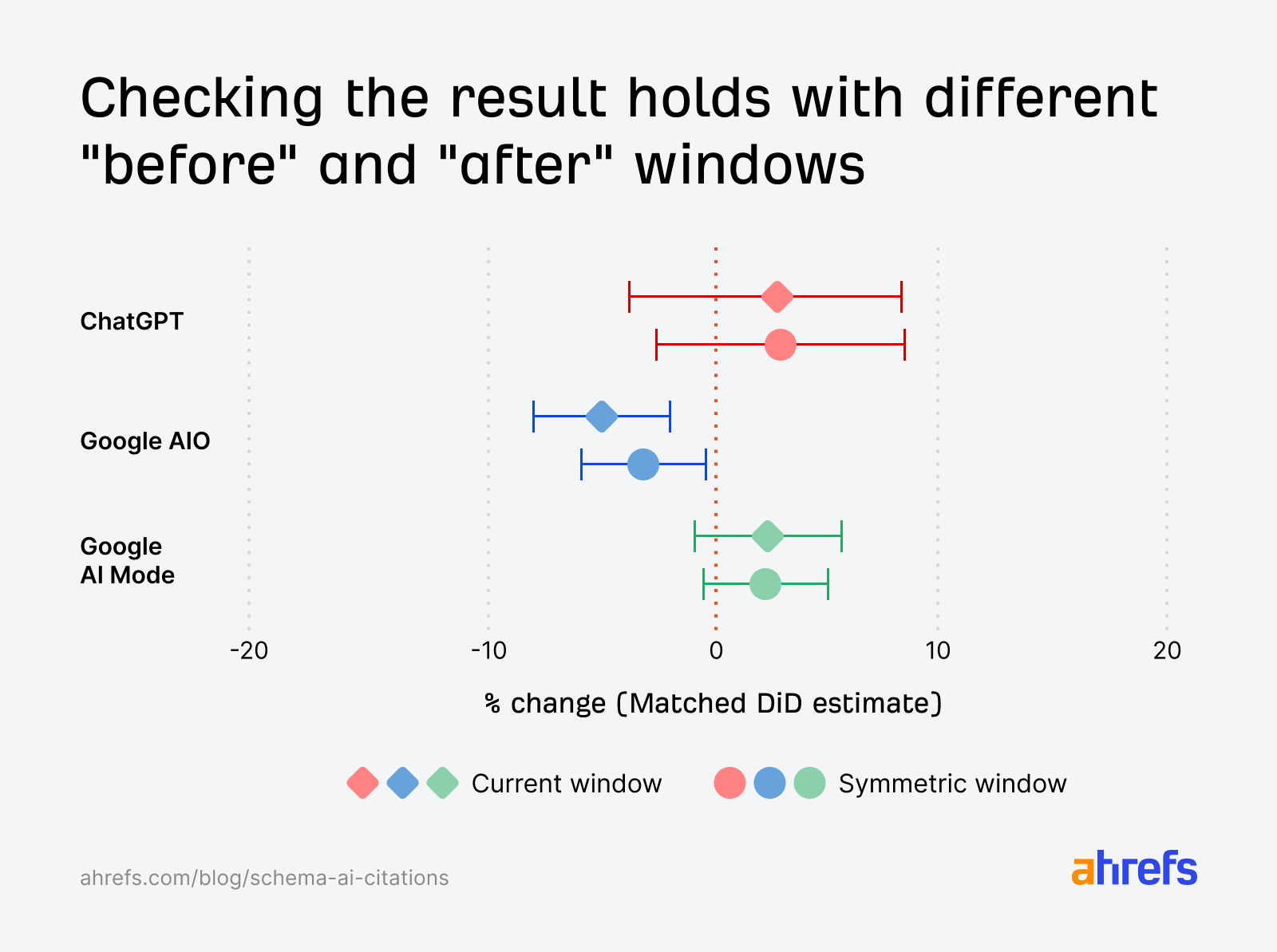

These percentages come from our most reliable analysis (a matched difference-in-differences [DiD] test).

In this test, treated pages in both AI Mode and ChatGPT performed slightly better than their matched control pages on average, but the differences are small enough that they could easily be random noise across thousands of URLs.
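One common way to express a difference-in-differences result as a percentage is to compare each group's before/after change as a ratio. The article doesn't spell out the exact estimator behind the −4.6%, +2.4%, and +2.2% figures, so treat this as an illustrative formulation. Here $\bar{T}$ and $\bar{C}$ are hypothetical notation for mean daily citations of treated and matched control pages, before ("pre") and after ("post") schema was added:

$$
\text{effect} \;=\; \frac{\bar{T}_{\text{post}} / \bar{T}_{\text{pre}}}{\bar{C}_{\text{post}} / \bar{C}_{\text{pre}}} \;-\; 1
\;=\; \exp\!\Big[\big(\ln \bar{T}_{\text{post}} - \ln \bar{T}_{\text{pre}}\big) - \big(\ln \bar{C}_{\text{post}} - \ln \bar{C}_{\text{pre}}\big)\Big] - 1
$$

An effect of 0 means treated pages moved exactly in line with their controls, whether both were rising or falling. An effect of −0.046 would mean treated pages ended up 4.6% below where the controls' trajectory implies they should have been.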

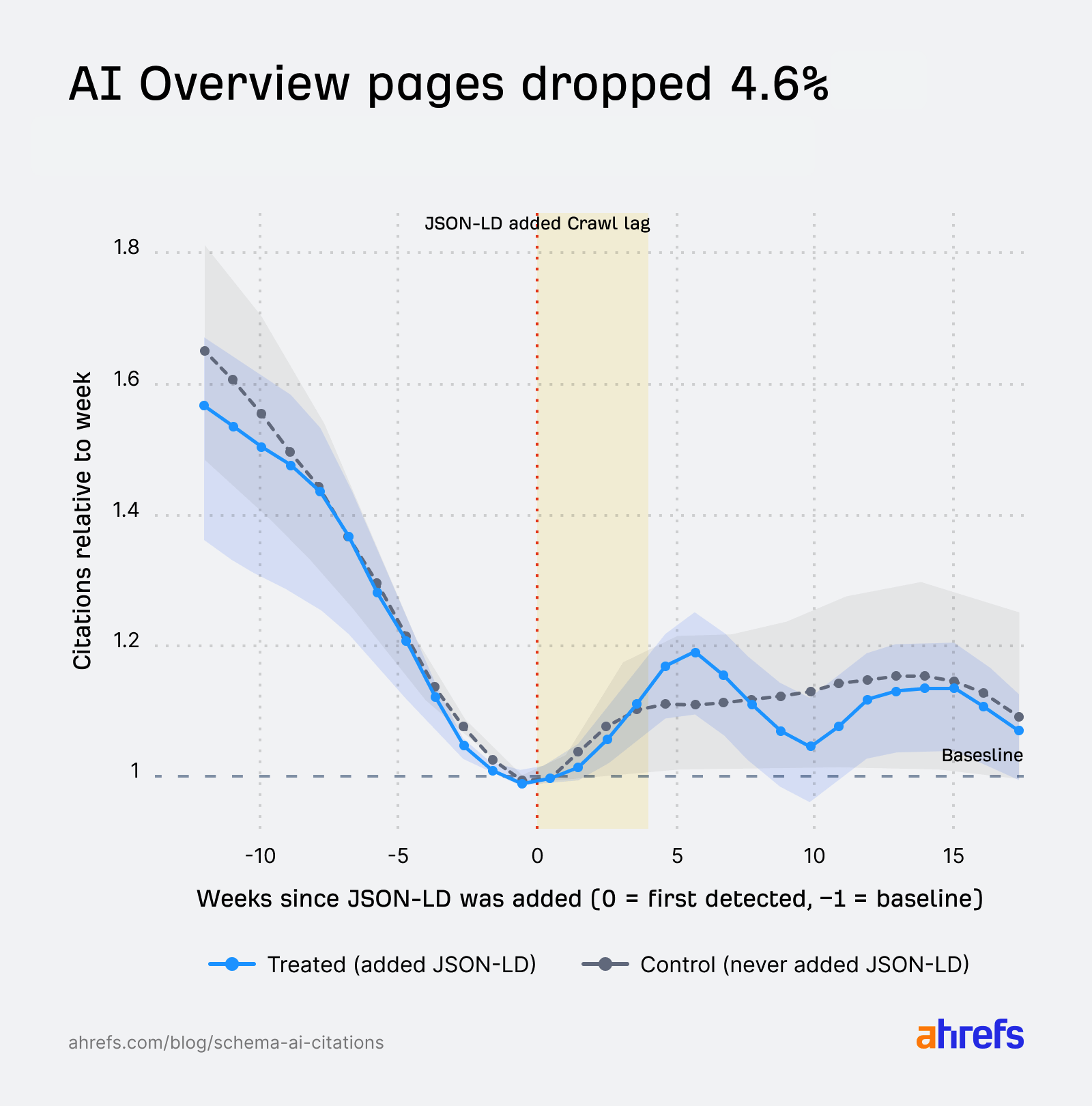

AI Overviews showed a 4.6% decline, which is small but statistically significant relative to matched control pages.

But that isn’t quite the full story—we’ll get into that in the next section.

So, overall, for AI Mode and ChatGPT, we can’t tell whether adding schema did a tiny bit of good or nothing at all.

AI Overview citations on treated pages fell by 4.6% relative to control pages, and the result is “statistically significant” (the odds of seeing a gap this large by pure chance are about 1 in 2,500).
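The article doesn't say which significance test produced the "1 in 2,500" figure, which corresponds to a p-value of 1/2,500 = 0.0004. To illustrate what such a number means, here's a two-sided permutation test on per-page changes: shuffle the treated/control labels many times and count how often a gap at least as large as the observed one appears purely by chance. The inputs (one change value per page, such as the log of post/pre citations) and the `permutation_p_value` name are assumptions, not the study's code.

```python
import random
from typing import Sequence


def _mean(values: Sequence[float]) -> float:
    return sum(values) / len(values)


def permutation_p_value(
    treated: Sequence[float],
    control: Sequence[float],
    n_permutations: int = 5000,
    seed: int = 0,
) -> float:
    """Two-sided permutation p-value for the difference in mean per-page change."""
    rng = random.Random(seed)  # fixed seed so results are reproducible
    observed = abs(_mean(treated) - _mean(control))
    pooled = list(treated) + list(control)
    n_treated = len(treated)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)  # randomly reassign the "treated" / "control" labels
        diff = abs(_mean(pooled[:n_treated]) - _mean(pooled[n_treated:]))
        if diff >= observed:
            extreme += 1
    # Adding 1 to both counts includes the observed labeling as one permutation.
    return (extreme + 1) / (n_permutations + 1)
```

With `n` shuffles, the smallest p-value this can report is 1/(n + 1), so resolving something around 1 in 2,500 needs at least a few thousand permutations.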

But before anyone reads this as “adding schema hurts your AI Overview citations”, there are two things you need to bear in mind.

- The absolute size is small. We’re talking about an average loss of around 12 daily citations per page, in a sample where most pages were getting hundreds.

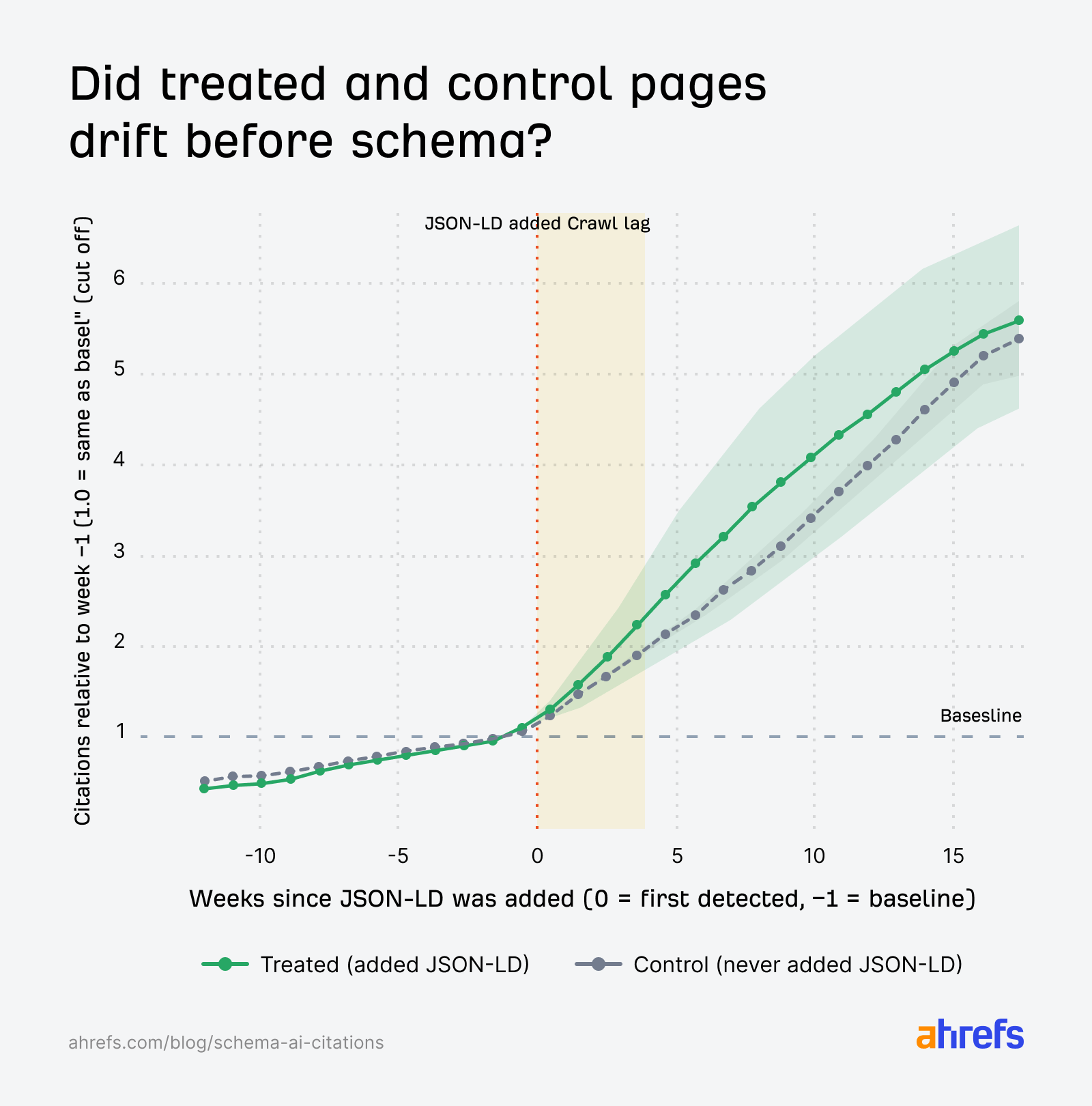

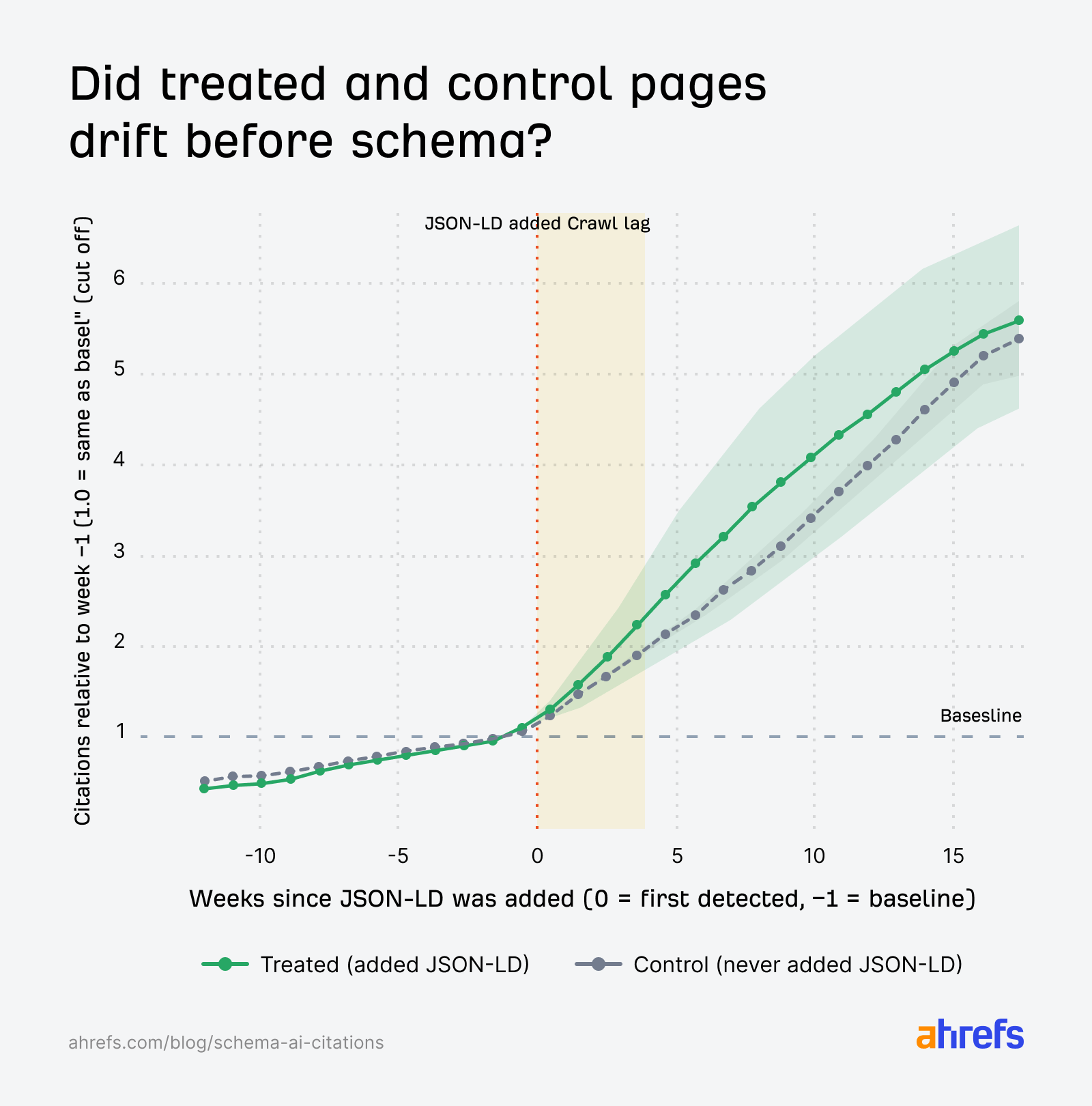

- Both treated and matched control pages were already on a steep downward trajectory before schema was added—the kind of decline you’d expect from AI Overviews pulling back from these specific types of content for reasons unrelated to schema (e.g. a Google update changing what gets surfaced, the content getting stale, or Google not having recrawled the page recently).

Sidenote.

How to read this chart: both lines are anchored to 1.0 at week −1 (the week before schema was added), so they always start at the same point by design. Before treatment, both groups decline together. After treatment, treated pages sit slightly below the matched controls (this is the −4.6% gap).
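The anchoring described above amounts to dividing each group's weekly citations by that group's own week −1 value. A minimal sketch, assuming citations are stored as a dictionary from week offset to citation count (a hypothetical format; `index_to_baseline` is an illustrative name):

```python
from typing import Dict


def index_to_baseline(weekly: Dict[int, float], baseline_week: int = -1) -> Dict[int, float]:
    """Rescale a weekly citation series so the baseline week equals 1.0.

    Keys are week offsets relative to when schema was added (e.g. -2, -1, 0, 1).
    Returns a new dict ordered by week.
    """
    base = weekly[baseline_week]
    if base == 0:
        raise ValueError("baseline week has zero citations; cannot index")
    return {week: value / base for week, value in sorted(weekly.items())}
```

Once both groups are indexed, `treated_index / control_index - 1` at a given post-treatment week gives a rough per-week reading of how far treated pages sit below (or above) their matched controls.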

That said, if adding schema had no effect on citations either way, we’d expect treated pages and matched controls to decline together at the same rate (which is broadly what we see for AI Mode and ChatGPT).

The fact that treated pages declined slightly more suggests schema had a small negative effect—but it could also reflect other factors.

We can’t tell which one it is from this data alone.

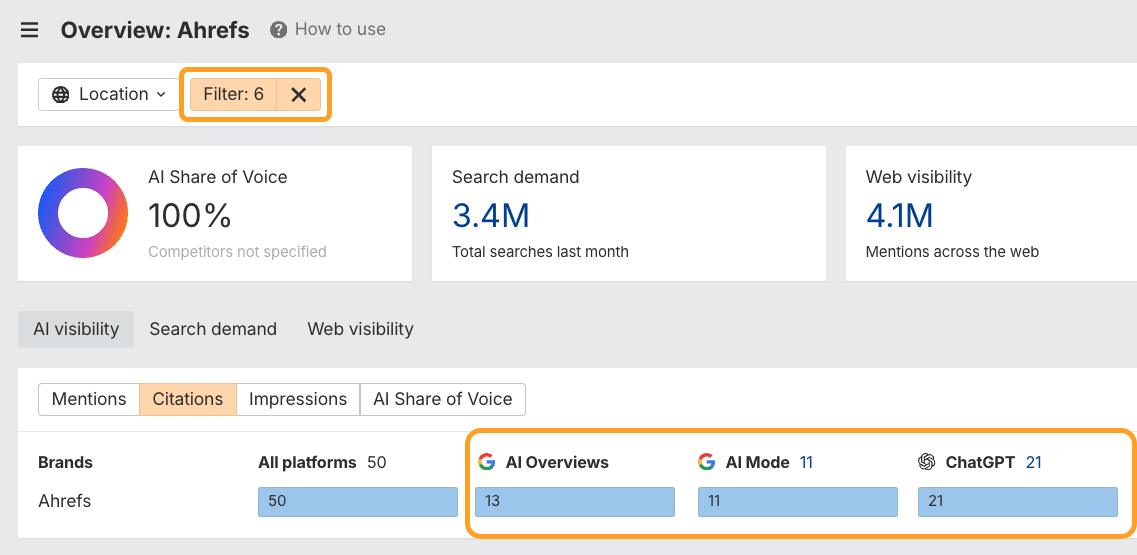

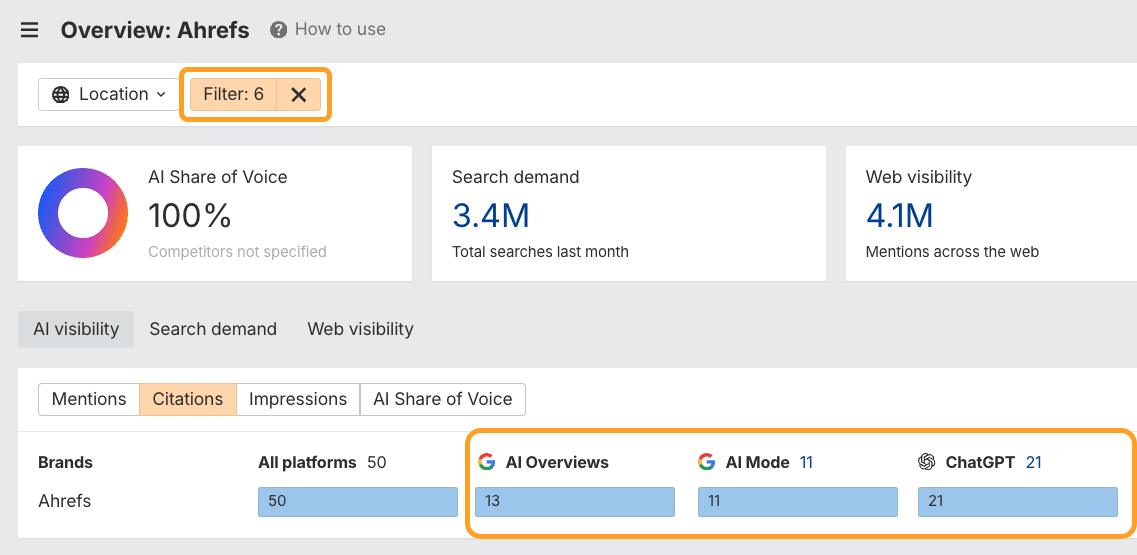

Using Brand Radar, Xibeijia pulled a few million URLs cited in AI Overviews.

She then retrieved the HTML history from our crawler database, labeled whether each URL contained JSON-LD, and identified the date on which schema presence flipped from “False” to “True”.

This left her with 1,885 pages that introduced JSON-LD between August 2025 and March 2026.
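The article doesn't describe how the crawler labeled each snapshot, so the following is an assumption-based sketch: one simple way to label saved HTML is to look for a `<script type="application/ld+json">` tag using Python's standard-library `html.parser`. The `has_json_ld` helper is hypothetical. Because it reads raw HTML only, schema injected later by JavaScript won't be detected, which matches the study's scope.

```python
from html.parser import HTMLParser


class JsonLdDetector(HTMLParser):
    """Flags whether any <script type="application/ld+json"> tag appears in raw HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.found = False

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names; values keep their case.
        if tag != "script":
            return
        for name, value in attrs:  # attrs is a list of (name, value) pairs
            if name == "type" and value and value.strip().lower() == "application/ld+json":
                self.found = True


def has_json_ld(html: str) -> bool:
    """Return True if the HTML contains at least one JSON-LD script block."""
    detector = JsonLdDetector()
    detector.feed(html)
    detector.close()
    return detector.found
```

Running `has_json_ld` over each stored snapshot of a URL, oldest first, produces the False/True sequence in which the first flip marks when schema was added.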

Finally, to analyze all of that data, she used Agent A, our new AI marketing agent.

For each page, Xibeijia knew two key dates:

- The last day our crawler checked the page and found no JSON-LD

- The first day our crawler detected JSON-LD on the page
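Given a page's labeled crawl history, both dates come from the first False-to-True flip. A minimal sketch, assuming the history is a list of (crawl date, has JSON-LD) pairs; `schema_transition` is a hypothetical helper, not the study's code:

```python
from datetime import date
from typing import Optional, Sequence, Tuple


def schema_transition(
    crawls: Sequence[Tuple[date, bool]],
) -> Optional[Tuple[date, date]]:
    """Return (last crawl without JSON-LD, first crawl with it) for the first flip.

    Returns None if the page never goes from "no JSON-LD" to "JSON-LD",
    e.g. it had schema at every crawl or never had it at all.
    """
    ordered = sorted(crawls)  # tuples sort by crawl date first
    for (prev_day, prev_has), (day, has) in zip(ordered, ordered[1:]):
        if not prev_has and has:
            return prev_day, day
    return None
```

The real change happened sometime after the first date and on or before the second, so the gap between crawls limits how precisely the treatment date is known. Pages that flip back and forth are assigned their first flip here, which is a simplifying assumption.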

Where schema might still matter: pages not yet cited by AI

There’s one important thing you need to know about this data: we studied pages that were already being cited heavily by AI. Every page in the dataset had 100+ AI Overview citations in February 2025, before any schema was added.

These pages were already inside the consideration set, being crawled and surfaced by LLMs. If a page is already getting picked up, our data suggests that adding schema isn’t going to push it higher.

But for pages that aren’t being seen by AI systems at all, schema markup might still play a role in helping them get crawled, parsed, or indexed in the first place. Our study can’t speak to that directly, but a recent experiment from searchVIU answers a related question.

They tested whether five major AI systems (ChatGPT, Claude, Perplexity, Gemini, and Google AI Mode) actually used schema markup when fetching a page in real-time.

Spoiler: none of them did. During direct retrieval, every system extracted only visible HTML content. JSON-LD, hidden Microdata, and hidden RDFa were all ignored.

A few other points to flag, and some questions worth testing next:

- Pages that add JSON-LD often change other things at the same time (e.g. links, content, technical fixes). We can’t fully separate schema from these kinds of co-occurrences.

- We pooled all schema types together (Article, FAQ, Product, HowTo, Organization). It’s possible some types help more than others, which may be worth digging into.

- We measured 30 days post-treatment. If JSON-LD has a slow-burn effect, a 60- or 90-day window might reveal more growth.

- We studied JSON-LD—the most widely used schema format. Other formats exist (Microdata and RDFa), but we haven’t yet tested them.

- We only looked at schema in the page’s HTML, not schema injected via JavaScript. AI crawlers appear to treat the two differently. ¹

- The small AI Overview decline is real but unexplained. Treated pages dropped about 4.6% more than matched controls, and we don’t know why. A follow-up study could look at whether specific schema types or specific content types account for the gap.
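The searchVIU result is easier to picture with a toy extractor. The sketch below is not how ChatGPT, Claude, Perplexity, Gemini, or AI Mode actually process pages (that isn't public). It's a hypothetical `visible_text` helper showing that any pipeline keeping only visible text drops JSON-LD, because JSON-LD lives inside a `<script>` element that browsers never display.

```python
from html.parser import HTMLParser
from typing import List


class VisibleTextExtractor(HTMLParser):
    """Collects text a reader would see, skipping <script>, <style>, and <noscript> bodies."""

    SKIP = {"script", "style", "noscript"}

    def __init__(self) -> None:
        super().__init__()
        self.depth = 0  # greater than 0 while inside a skipped element
        self.chunks: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        # Keep non-empty text only when we're outside every skipped element.
        if not self.depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    """Return the page's visible text joined by single spaces."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)
```

In this toy model, the `headline` inside a JSON-LD block never reaches the output, while the same words in an `<h1>` would.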

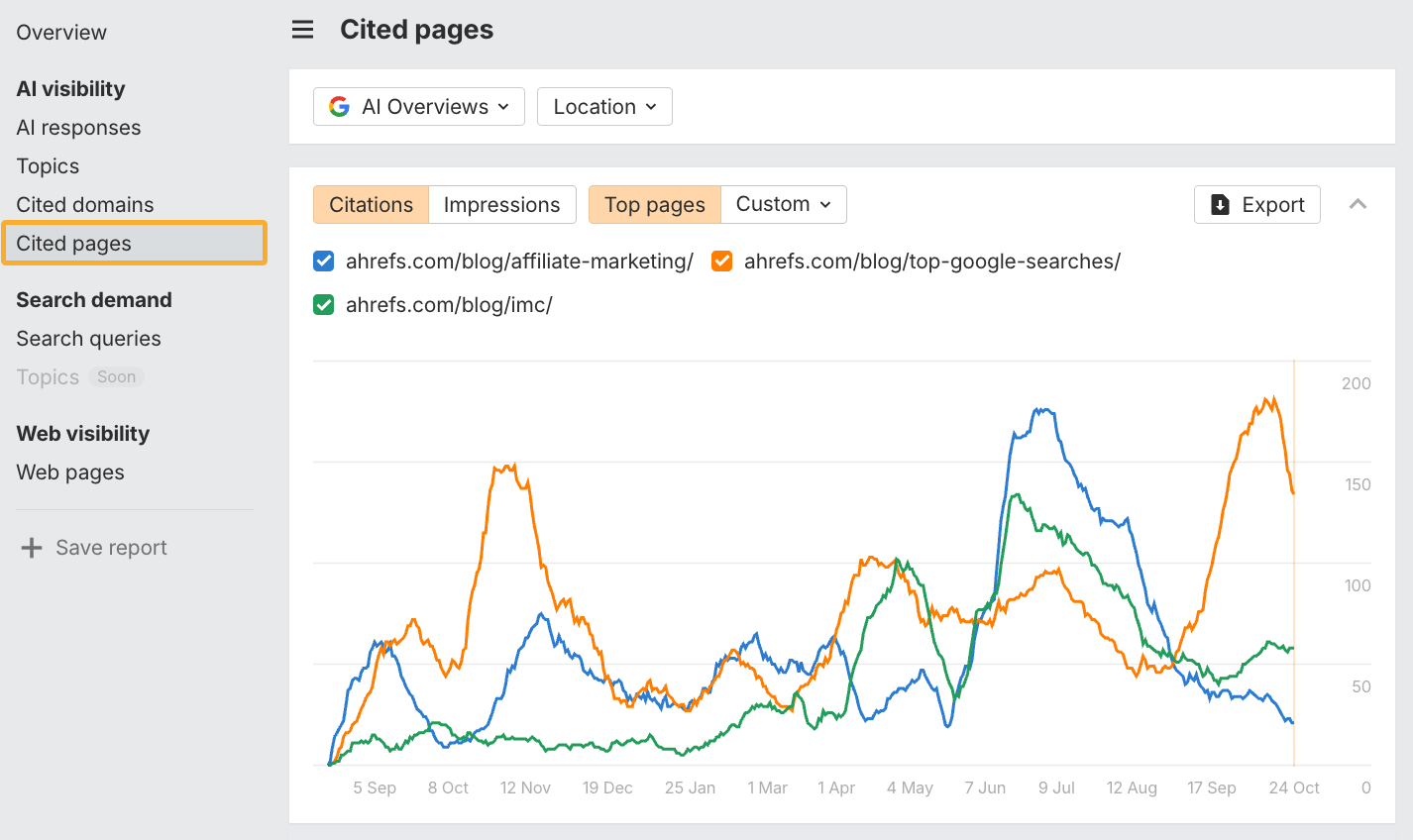

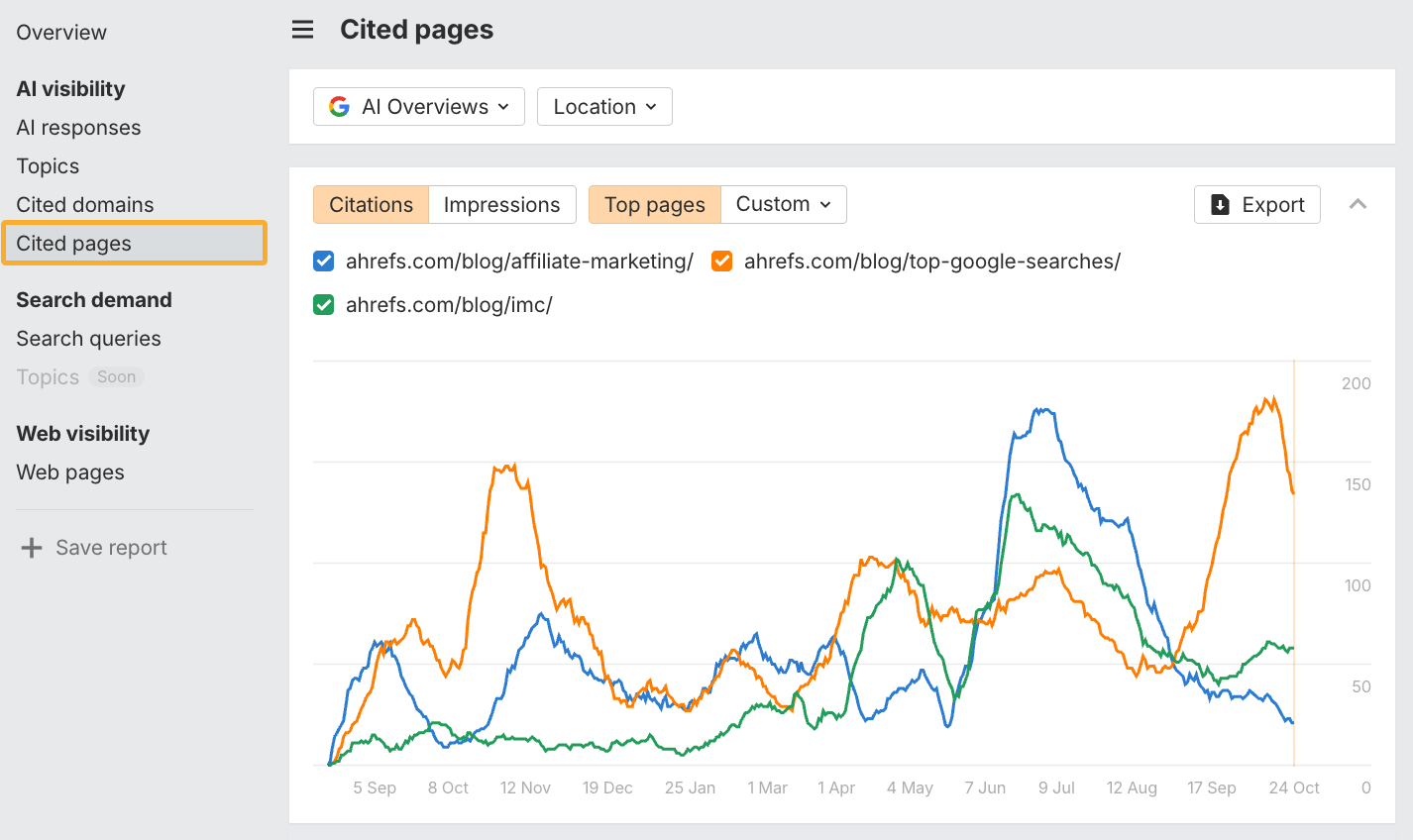

- Pick 5–10 test pages where you plan to add JSON-LD. Ideally, choose pages already getting some AI citations so you have a baseline (pages with zero citations make it harder to tell whether schema did nothing, or whether the page just wasn’t going to get cited either way). You can check this in the Cited Pages report.

- Pick 5–10 control pages with similar citation levels that you’re not adding schema to. This is what separates “schema did something” from “AI Overviews shifted for everyone that month.”

- Record baseline citations for both groups across AI Overview, AI Mode, and ChatGPT in Brand Radar. Just apply URL filters to isolate those citation numbers.

- Add schema to your test pages and note the date. Don’t change anything else on those pages during the test window.

- Compare both groups after 30 days (or longer if you can). The question is: “did treated pages go up more than control pages did?”
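Once you have before and after citation counts for both groups, the final comparison is a small calculation. A minimal sketch, assuming you've exported one citation number per page for each period (the `did_ratio` name and its list inputs are hypothetical; this is a simple ratio-of-changes comparison, not necessarily the estimator used in the study):

```python
from statistics import mean
from typing import Dict, Sequence


def did_ratio(
    treated_before: Sequence[float],
    treated_after: Sequence[float],
    control_before: Sequence[float],
    control_after: Sequence[float],
) -> Dict[str, float]:
    """Compare how much treated pages changed relative to control pages.

    Each argument holds one citation count per page for that period.
    relative_effect > 0 means treated pages did better than controls.
    """
    if mean(treated_before) == 0 or mean(control_before) == 0:
        # Zero baselines make ratios meaningless; pick pages that already get citations.
        raise ValueError("baseline citations must be non-zero for both groups")
    treated_change = mean(treated_after) / mean(treated_before)
    control_change = mean(control_after) / mean(control_before)
    return {
        "treated_change": treated_change - 1,
        "control_change": control_change - 1,
        "relative_effect": treated_change / control_change - 1,
    }
```

Dividing by the control group's change is what separates "schema did something" from "AI Overviews shifted for everyone that month". If both groups drop by similar amounts, `relative_effect` stays near zero. With only 5–10 pages per group, treat small differences as noise.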
